Another interesting case in demand forecasting is high-frequency data. For example, if you work with daily demand, you might notice that it increases every Monday but also exhibits proper seasonal fluctuations over the year (e.g. a decline every winter). What do you do in this case?

One of the solutions (old but gold) is the multiple seasonal ETS model, originally developed by James Taylor (2003) for pure additive exponential smoothing. The idea is simple: to model multiple seasonal cycles, add one seasonal component per cycle, e.g. one for the day-of-week (frequency 7) and one for the day-of-year (frequency 365) effect. While it worked fine on some examples, its main issue has been computational speed (or rather slowness): conventional ETS needs to estimate all the smoothing parameters plus the initial values of all the seasonal indices and other components. Both ADAM and ES in the smooth package support multiple seasonalities and avoid the whole issue by using a different model initialisation called “backcasting”, in which the initial states are obtained by running the model backwards through the data instead of being estimated.
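To make the idea of "one seasonal component per cycle" concrete, here is a minimal pure-Python sketch of an additive double seasonal recursion (ETS(A,N,A) with two seasonal components, in the spirit of Taylor's model). It is a simplified illustration, not the smooth implementation: the function `double_seasonal_ets` is hypothetical, and it assumes the smoothing parameters and initial states are already known.

```python
from typing import List, Tuple


def double_seasonal_ets(
    y: List[float],
    m1: int,
    m2: int,
    alpha: float,
    gamma1: float,
    gamma2: float,
    level0: float,
    season1: List[float],
    season2: List[float],
) -> Tuple[List[float], List[float]]:
    """Hypothetical sketch of additive ETS with two seasonal cycles.

    season1 / season2 hold the initial seasonal indices for the m1 and m2
    periods preceding the first observation. Returns (fitted, errors).
    """
    if len(season1) != m1 or len(season2) != m2:
        raise ValueError("initial seasonal vectors must have lengths m1 and m2")
    level = level0
    s1 = list(season1)  # s1[t % m1] holds the index from one m1-cycle ago
    s2 = list(season2)  # s2[t % m2] holds the index from one m2-cycle ago
    fitted: List[float] = []
    errors: List[float] = []
    for t, obs in enumerate(y):
        i1, i2 = t % m1, t % m2
        # One-step-ahead forecast: level plus both seasonal effects
        y_hat = level + s1[i1] + s2[i2]
        e = obs - y_hat
        # Error-correction updates, one smoothing parameter per component
        level += alpha * e
        s1[i1] += gamma1 * e
        s2[i2] += gamma2 * e
        fitted.append(y_hat)
        errors.append(e)
    return fitted, errors


# Toy series: level 10, a 2-period and a 4-period pattern, no noise
pattern1, pattern2 = [1.0, -1.0], [2.0, 0.0, -2.0, 0.0]
y = [10 + pattern1[t % 2] + pattern2[t % 4] for t in range(12)]
fitted, errors = double_seasonal_ets(
    y, m1=2, m2=4, alpha=0.3, gamma1=0.1, gamma2=0.5,
    level0=10.0, season1=pattern1, season2=pattern2,
)
print(max(abs(e) for e in errors))  # 0.0, since the initial states are exact
```

The sketch also shows where the cost comes from: every seasonal index needs a starting value. With lags 48 and 336, conventional initialisation would mean estimating roughly 1 + 48 + 336 = 385 initial states on top of the three smoothing parameters, which is exactly what backcasting avoids.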

Here is a classic example from James’ paper: half-hourly electricity demand (see the image in the post). It clearly has half-hour-of-day and day-of-week effects. In ES, this means providing a vector to the lags parameter with one lag per seasonal cycle: 48 half-hours in a day and 48 × 7 = 336 half-hours in a week:

```python
from smooth import ES
from fcompdata import taylor

# Fit ES with automatic ETS model selection;
# holdout=True keeps the last h=336 observations (one week) aside for evaluation
model = ES(lags=[48, 336], h=336, holdout=True)
model.fit(taylor.y)
model.predict(h=336)
print(model)
```

This is the output I get from the function:

```
Time elapsed: 2.03 seconds
Model estimated using ES() function: ETS(MNM)
With backcasting initialisation
Distribution assumed in the model: Normal
Loss function type: likelihood; Loss function value: 25391.1773
Persistence vector g:
 alpha gamma1 gamma2
0.2899 0.1283 0.5270

Sample size: 3696
Number of estimated parameters: 4
Number of degrees of freedom: 3692
Information criteria:
       AIC       AICc        BIC       BICc
50790.3546 50790.3654 50815.2146 50815.2591

Forecast errors:
ME: 829.1195; MAE: 942.1447; RMSE: 1065.1127
sCE: 941.5012%; Asymmetry: 9.2%; sMAE: 3.1841%; sMSE: 0.1296%
MASE: 1.4491; RMSSE: 1.1286; rMAE: 0.1408; rRMSE: 0.1300
```

The whole run took only 2.03 seconds on this data. In that time, the function fitted several candidate ETS models and selected the one with the lowest AICc. The selected model is ETS(M,N,M), i.e. multiplicative error, no trend and multiplicative seasonality, which makes perfect sense for this data.
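To see where the AICc comes from, here is a small sketch that recomputes the information criteria from the printed output. It assumes that the printed “Loss function value” is the negative log-likelihood and that k is the “Number of estimated parameters”; the helper `information_criteria` is hypothetical and not part of smooth.

```python
import math
from typing import Dict


def information_criteria(neg_loglik: float, k: int, n: int) -> Dict[str, float]:
    """Hypothetical helper: information criteria from a negative log-likelihood.

    neg_loglik: minus the log-likelihood (assumed to be the printed loss value)
    k: number of estimated parameters
    n: sample size
    """
    aic = 2 * neg_loglik + 2 * k
    # Small-sample correction of AIC
    aicc = aic + 2 * k * (k + 1) / (n - k - 1)
    bic = 2 * neg_loglik + k * math.log(n)
    # Corrected BIC: the BIC penalty inflated by n / (n - k - 1)
    bicc = 2 * neg_loglik + k * math.log(n) * n / (n - k - 1)
    return {"AIC": aic, "AICc": aicc, "BIC": bic, "BICc": bicc}


# Values printed for the ES model
for name, value in information_criteria(25391.1773, k=4, n=3696).items():
    print(f"{name}: {value:.4f}")
```

Running it prints AIC 50790.3546, AICc 50790.3654, BIC 50815.2146 and BICc 50815.2591, matching the ES output. Note that the AICc correction term, 2k(k+1)/(n-k-1) = 40/3691 ≈ 0.011, is negligible at this sample size, so AIC and AICc rank the models the same way here.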

Is there a way to improve this model? Yes! Taylor notes that adding an AR(1) term to the cocktail tends to improve accuracy for multiple seasonal series. We can try that by switching to ADAM:

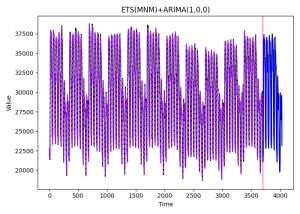

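```python
from smooth import ADAM
from fcompdata import taylor

# Fit ADAM ETS(MNM)+AR(1) model with the same seasonal lags and holdout
model = ADAM(model="MNM", ar_orders=1, lags=[48, 336], h=336, holdout=True)
model.fit(taylor.y)
print(model)
# Plot the data and the point forecasts
model.plot(7)
```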

Here is the output:

```
Time elapsed: 1.04 seconds
Model estimated using ADAM() function: ETS(MNM)+ARIMA(1,0,0)
With backcasting initialisation
Distribution assumed in the model: Gamma
Loss function type: likelihood; Loss function value: 24157.2473
Persistence vector g:
 alpha gamma1 gamma2
0.1097 0.2225 0.3481

ARMA parameters of the model:
       Lag 1
AR(1) 0.6852

Sample size: 3696
Number of estimated parameters: 5
Number of degrees of freedom: 3691
Information criteria:
       AIC       AICc        BIC       BICc
48324.4947 48324.5109 48355.5697 48355.6365

Forecast errors:
ME: 276.4061; MAE: 462.5092; RMSE: 588.5957
sCE: 313.8711%; Asymmetry: 2.1%; sMAE: 1.5631%; sMSE: 0.0396%
MASE: 0.7114; RMSSE: 0.6237; rMAE: 0.0691; rRMSE: 0.0719
```

The resulting model not only has a lower AICc (48324.5109 vs 50790.3654) but also produces more accurate point forecasts on the holdout set (RMSSE of 0.6237 vs 1.1286). The image in the post shows the data and the point forecasts for it.
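For reference, here is a minimal sketch of the two scaled error measures, assuming the common definitions that scale the holdout errors by the in-sample one-step naive errors (differences y_t - y_{t-1}); the exact scaling used by smooth may differ, and the function names are hypothetical.

```python
import math
from typing import List, Sequence


def _naive_diffs(insample: Sequence[float]) -> List[float]:
    # In-sample one-step naive errors: y_t - y_{t-1}
    return [b - a for a, b in zip(insample[:-1], insample[1:])]


def mase(holdout: Sequence[float], forecast: Sequence[float],
         insample: Sequence[float]) -> float:
    """Holdout MAE divided by the mean absolute in-sample naive error."""
    mae = sum(abs(a - f) for a, f in zip(holdout, forecast)) / len(holdout)
    scale = sum(abs(d) for d in _naive_diffs(insample)) / (len(insample) - 1)
    return mae / scale


def rmsse(holdout: Sequence[float], forecast: Sequence[float],
          insample: Sequence[float]) -> float:
    """Holdout RMSE divided by the in-sample naive root mean squared error."""
    mse = sum((a - f) ** 2 for a, f in zip(holdout, forecast)) / len(holdout)
    scale = sum(d ** 2 for d in _naive_diffs(insample)) / (len(insample) - 1)
    return math.sqrt(mse / scale)


# Toy check: the naive in-sample differences are all 1, so both scales are 1
insample = [1.0, 2.0, 3.0, 4.0]
print(mase([5.0, 6.0], [5.0, 8.0], insample))   # 1.0
print(rmsse([5.0, 6.0], [5.0, 8.0], insample))  # 1.4142135623730951, i.e. sqrt(2)
```

Since both models are fitted to the same in-sample data, they share the same scale, so comparing RMSSEs is equivalent to comparing RMSEs: 588.5957 / 1065.1127 ≈ 0.553, the same ratio as 0.6237 / 1.1286, i.e. roughly a 45% reduction in RMSE.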

What else can we do here? Actually, quite a lot: multistep losses, seasonal ARIMA, explanatory variables, and things only get more complicated from there. Have a look at this.

Do I hear someone shouting “TBATS”? TBATS is exponential smoothing with additional bells and whistles (ETS + Box-Cox transformation + adapted Fourier terms + ARMA errors). I don’t have it as a separate function in smooth just yet, but you can reproduce it, for example, like this.
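The “Fourier terms” idea can be illustrated with a small sketch that builds k pairs of sine and cosine regressors for a seasonal period. This is the static Fourier-regressor version: TBATS itself lets its trigonometric seasonal components evolve over time, so treat this only as an illustration of the building block. The helper `fourier_terms` is hypothetical.

```python
import math
from typing import List


def fourier_terms(n: int, period: float, k: int) -> List[List[float]]:
    """Return an n x 2k matrix of [sin, cos] pairs for harmonics 1..k."""
    if 2 * k > period:
        raise ValueError("k must satisfy 2k <= period")
    rows = []
    for t in range(n):
        row = []
        for j in range(1, k + 1):
            angle = 2 * math.pi * j * t / period
            row.extend([math.sin(angle), math.cos(angle)])
        rows.append(row)
    return rows


# Weekly cycle of half-hourly data (period 336) described by 5 harmonics,
# i.e. 10 regressors instead of 336 separate seasonal indices
X = fourier_terms(n=672, period=336, k=5)
print(len(X), len(X[0]))  # 672 10
```

The period is a float so that non-integer cycles (e.g. 365.25 days per year) also work, and the number of harmonics k controls how smooth the seasonal shape is.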

So, what are you waiting for? Dive in and see how it works for yourself!

Install smooth: pip install smooth