Many of you have seen this image on the Internet — I’ve seen it myself a few times on LinkedIn lately. People say it depicts the “Dunning-Kruger” effect… But did you know this is actually an internet meme with little to do with the original paper?

Here is one recent example: a screenshot of a post by Fotios Petropoulos about the effect.

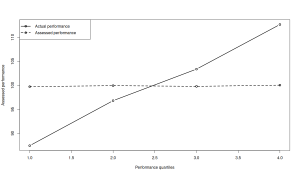

In the original paper, Kruger and Dunning (1999) ran experiments with undergraduates on humour, logical reasoning, and grammar. Participants completed a test and estimated their percentile rank. The authors then sorted participants into four quartiles by actual performance and computed averages for actual and self-assessed performance for each quartile. The plots in their paper – the real Dunning–Kruger effect – are just four data points per line, not a smooth curve over a learning journey (second image).

What did they find? People in the bottom quartile substantially overestimated their performance, often believing they were average or above. Top performers slightly underestimated their standing. The key finding is an asymmetry in miscalibration: low performers overestimate, high performers slightly underestimate.

This has almost nothing to do with the popular “experience vs. confidence” image. The original X‑axis is performance quartile at a single point in time; the meme’s X‑axis is a vague notion of “experience” through time. The original Y‑axis is the assessed test percentile; the meme’s is a free‑floating “confidence” construct. In the actual data, perceived performance increases with actual performance – there is no early spike, no “valley of despair,” no “slope of enlightenment.” That swooping curve is an internet-era graphic never reported by Kruger and Dunning, and it misleadingly frames the effect as a personal development trajectory the paper never studied.

There is also a serious critique of the original paper from a statistical point of view. For example, Gignac and Zajenkowski (2020) showed that sorting people into quartiles and plotting average self-assessment against average performance can, by itself, generate the characteristic pattern, purely as a statistical artefact. In their own empirical data, miscalibration was roughly constant across ability levels, consistent with measurement noise rather than a special cognitive deficit in low performers. You can actually reproduce the pattern using two random uncorrelated variables. Here is a simple example in R:

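    set.seed(41)
    # Two independent random variables: "actual" and "assessed" performance
    x <- rnorm(10000, 100, 10)
    y <- rnorm(10000, 100, 10)
    plot(x, y)

    # Quartile boundaries of actual performance (min, Q1, median, Q3, max)
    xQ <- quantile(x)

    # Average actual and assessed performance within each quartile of x
    yMeans <- xMeans <- vector("numeric", 4)
    for(i in 1:4){
        xMeans[i] <- mean(x[x < xQ[i+1] & x > xQ[i]])
        yMeans[i] <- mean(y[x < xQ[i+1] & x > xQ[i]])
    }

    # Solid line: actual performance; dashed line: assessed performance
    plot(1:4, xMeans, type="b", ylim=range(xMeans, yMeans),
         xlab="Real performance", ylab="Assessed performance",
         lwd=2)
    lines(yMeans, lwd=2, lty=2)
    points(yMeans, lwd=2)
    legend("topleft",
           legend=c("Actual performance", "Assessed performance"),
           lwd=2, lty=c(1,2), pch=1)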

This produces an image like this:

If you introduce a correlation between the two variables, the image starts to look even more similar to the ones from the original paper.
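To make the correlated case concrete, here is a minimal sketch. The correlation value `rho = 0.3` is my own assumption, chosen purely for illustration and not taken from the original paper or from Gignac and Zajenkowski (2020). The assessed score `y` is built so that it shares part of its variance with the actual score `x` while keeping the same mean and standard deviation.

```r
set.seed(41)
n <- 10000
rho <- 0.3  # assumed correlation, for illustration only

# Actual performance
x <- rnorm(n, 100, 10)
# Assessed performance: correlated with x at roughly rho, same mean and sd
y <- 100 + rho * (x - 100) + sqrt(1 - rho^2) * rnorm(n, 0, 10)

# Assign every observation to a quartile of actual performance (codes 1 to 4)
quartile <- cut(x, breaks = quantile(x), include.lowest = TRUE, labels = FALSE)

# Quartile averages of actual and assessed performance
xMeans <- tapply(x, quartile, mean)
yMeans <- tapply(y, quartile, mean)

plot(1:4, xMeans, type = "b", ylim = range(xMeans, yMeans),
     xlab = "Real performance", ylab = "Assessed performance",
     lwd = 2)
lines(1:4, yMeans, type = "b", lwd = 2, lty = 2)
legend("topleft",
       legend = c("Actual performance", "Assessed performance"),
       lwd = 2, lty = c(1, 2), pch = 1)
```

With a positive but imperfect correlation, the dashed line rises with the solid one but more slowly, so the bottom quartile appears to overestimate and the top quartile appears to underestimate, even though nothing but noise and regression to the mean went into the simulation.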

So there might be a real effect – many follow-up studies have measured it with more rigorous tools – but Dunning and Kruger’s method was not the right one to establish it. And the “experience vs. confidence” image is just a meme and a serious misconception that should not be used.

P.S. If you wonder who the “leading expert” that Fotios Petropoulos refers to in his post is – it’s me. Not sure why he doesn’t tag me properly.