1.1 What is a model?

Reality is complex. Everything is connected with everything else, and it is difficult to isolate an object or a phenomenon from other objects or phenomena and from their environment. Because of this complexity, we cannot work with reality directly: we need to simplify it, keep only the most important parts and analyse them. This process of simplification means that we create a model of reality, work with it and draw conclusions about reality based on it.

Pidd (2010) defines a model as “an external and explicit representation of part of reality as seen by the people who wish to use that model to understand, to change, to manage and to control that part of reality”. Let us analyse this definition.

- It is external and explicit, because if you only think about something, it is not a model. A vague view of an object is not a model: it needs to be formulated.

- It is a representation of part of reality, because it is not possible to represent reality in full - it is too complex, as discussed above.

- Technically speaking, a model without a purpose is still a model, but Pidd (2010) points out in his definition that without a purpose the model becomes useless (thus “to understand, to change, to manage and to control”).

- Finally, as seen by people is an important element, which shows that models are always subjective. One and the same question can be answered with different models, depending on the preferences of the analyst.

This definition is wide and covers different types of models, from simple graphical ones to complex imitations. In fact, there are four fundamental types of models (listed in order of increasing complexity):

- Textual,

- Visual,

- Mathematical,

- Imitation.

A textual model is just a description of an object or a process. An instruction on how to assemble a chair is an example of a textual model. Any classification is a textual model as well, so the list of four types of models above is itself a textual model.

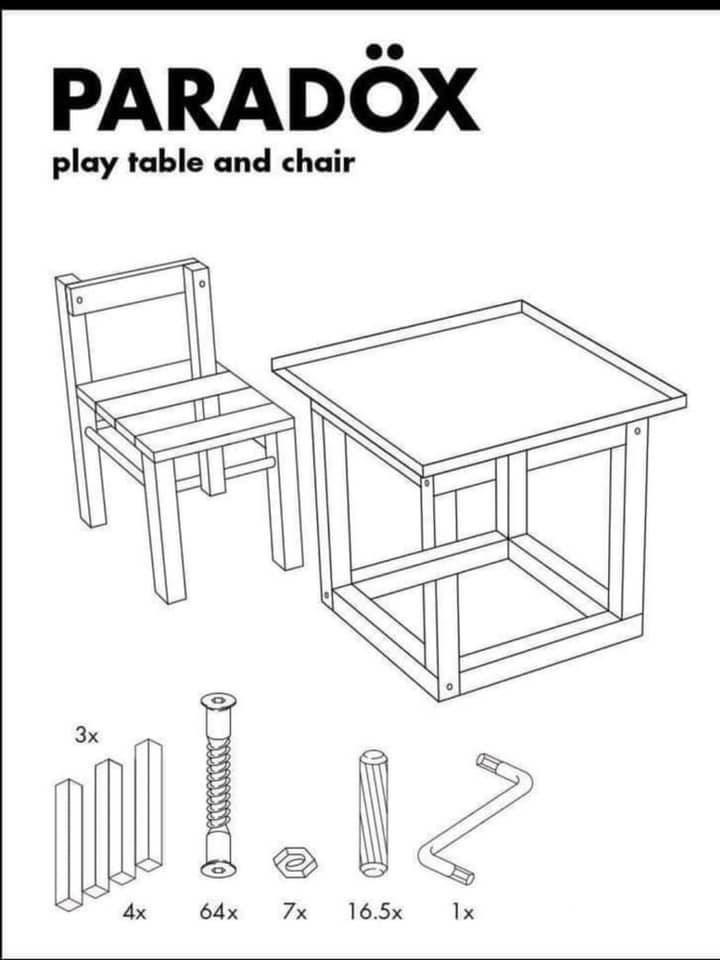

Visual model is a graphical or a schematic representation of an object or a process. An example of such a model is provided in Figure 1.1.

Figure 1.1: Chair assembly instruction. Found on Reddit.

A mathematical model is a model represented using equations. It is more complex than the previous two, because it requires an understanding of mathematics. At the same time, it can be more precise than the previous two types in capturing the structure of reality and making predictions about it. The mass-energy equivalence equation is an example of such a model: \[\begin{equation*} E = m c^2 . \end{equation*}\]

A mathematical model can in turn be either deterministic or stochastic. The former assumes that there is no randomness in it, while the latter implies that randomness exists and can be modelled in one way or another. The related classification of models based on the amount of randomness is:

- White box - the deterministic model. An example of such a model is a linear programming model, which assumes that there is no randomness in the data;

- Grey box - the model that assumes some randomness, but for which the structure is known (or assumed). Any statistical model can be considered as a grey box: typically, we have an understanding of how elements in it interact with each other and how the result is obtained;

- Black box - the model with randomness, for which we do not know what is happening inside. An example of such a model is an artificial neural network.
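As a minimal sketch of the difference between a deterministic and a stochastic model, the Python snippet below evaluates both on the same input several times. The linear structure, the parameter values and the normally distributed noise are assumptions made purely for illustration; they do not come from the text above.

```python
import random


def deterministic_model(x, intercept=2.0, slope=0.5):
    """White box: the same input always produces the same output."""
    return intercept + slope * x


def stochastic_model(x, rng, intercept=2.0, slope=0.5, sigma=1.0):
    """Grey box: a known structure plus noise from an assumed distribution."""
    return deterministic_model(x, intercept, slope) + rng.gauss(0.0, sigma)


rng = random.Random(42)  # fixed seed, so that the experiment is reproducible

# Evaluate each model three times on the same input
deterministic_outputs = [deterministic_model(10.0) for _ in range(3)]
stochastic_outputs = [stochastic_model(10.0, rng) for _ in range(3)]

print(deterministic_outputs)  # three identical values
print(stochastic_outputs)     # three different values scattered around 7
```

The deterministic model returns the same value on every call, while the stochastic one returns values that vary around the same structure.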

Finally, an imitation model is a simplified reproduction of a real object or a process. This can be, for example, a physical model of a building standing in an architect's room, or a mental arrangement in psychology.

In these lecture notes, we will deal only with the first three types of models, focusing on the third one.

When constructing mathematical models, we will inevitably deal with variables, i.e. factors that are potentially related to each other and reflect some aspects of the real object or phenomenon. These variables can be split into two categories:

- Input, or external, or exogenous, or explanatory variables - those that are provided to us and are assumed to impact the variable (or several variables) of interest;

- Output, or internal, or endogenous, or response variable(s) - the variable(s) of main interest, assumed to be related to a set of explanatory variables.

Models that have only one response variable are called “univariate” models. In some cases, we might have several response variables (for example, sales of several similar products), and we would then deal with a “multivariate” model. In the literature, you might come across a different definition of univariate / multivariate models. For example, some consider a model with several variables to be multivariate, even if it has only one response variable and several explanatory ones. Throughout these lecture notes, however, we use the definitions above, which focus on the response variable.

1.1.1 Models, methods et al.

There are several other definitions that will be useful throughout these lecture notes:

- Statistical model (or ‘stochastic model’, or just ‘model’ in these lecture notes) is a ‘mathematical representation of a real phenomenon with a complete specification of distribution and parameters’ (Svetunkov and Boylan, 2019). Very roughly, the statistical model is something that contains a structure (defined by its parameters) and a noise that follows some distribution.

- True model is the idealistic statistical model that is correctly specified (has all the necessary components in the correct form) and applied to the data in the population. By this definition, the true model is never reachable in reality, but it is achievable in theory if for some reason we know which components and variables should be in the model and in what form, and have all the data in the world. One important element of the true model is that its error term cannot have any structure and thus has zero expectation. The notion itself is important when discussing how far the model that we use is from the true one.

- Estimated model (aka ‘applied model’ or ‘used model’) is the statistical model that was constructed and estimated on the available sample of data. This typically differs from the true model, because the latter is not known. Even if the specification of the true model is known for some reason, the parameters of the estimated model will differ from the true parameters due to sampling randomness, but will hopefully converge to the true ones if the sample size increases.

- Data generating process (DGP) is an artificial statistical model showing how the data could be generated in theory. This notion is utopian and can be used in simulation experiments in order to check how the selected model with a specific estimator behaves in a specific setting. In real life, the data is not generated from any process, but is usually based on complex interactions between different agents in a dynamic environment. Note that I make a distinction between the DGP and the true model, because I do not think that the idea of something being generated using a mathematical formula is helpful. Many statisticians will not agree with me on this distinction.

- Forecasting method is a mathematical procedure that generates point and / or interval forecasts, with or without a statistical model (Svetunkov and Boylan, 2019). Very roughly, a forecasting method is just a way of producing forecasts that does not explain how the components of time series interact with each other. It might be needed in order to filter out the noise and extrapolate the structure.

Mathematically, in the simplest case the true model can be presented in the form: \[\begin{equation} y_t = \mu_{y,t} + \epsilon_t, \tag{1.1} \end{equation}\] where \(y_t\) is the actual observation, \(\mu_{y,t}\) is the structure in the data, \(\epsilon_t\) is the noise, an unpredictable element with zero mean that arises because of the effect of many small factors, and \(t\) is the time index. An example would be the daily sales of beer in a pub, which have some seasonality (we see growth in sales every weekend), some other elements of structure, plus the white noise (I might go to a different pub, reducing the sales of beer by one pint). So what we typically want to do in forecasting is to capture the structure and represent the noise with a distribution with some parameters.

When the chosen model is applied to the data, it can be presented as: \[\begin{equation} y_t = \hat{\mu}_{y,t} + e_t, \tag{1.2} \end{equation}\] where \(\hat{\mu}_{y,t}\) is the estimate of the structure and \(e_t\) is the estimate of the white noise (also known as “residuals”). As you can see, even if the structure is correctly captured, the main difference between (1.1) and (1.2) is that the latter is estimated on a sample, so we can only approximate the true structure with some degree of precision.

If we generate the data from the model (1.1), then we can talk about the DGP, keeping in mind that we are talking about an artificial experiment, for which we know the true model and the parameters. This can be useful if we want to see how different models and estimators behave in different conditions.
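A simulation experiment of this kind can be sketched in a few lines of Python. The snippet below is only an illustration under strong assumptions that are not part of the text: the structure \(\mu_{y,t}\) is a constant level, the noise \(\epsilon_t\) is normal, and the structure is estimated with the sample mean. It generates data from (1.1), estimates the model (1.2) on samples of different sizes and records how far the estimate is from the true level.

```python
import random
import statistics

TRUE_LEVEL = 100.0  # mu_{y,t}, assumed constant over time (illustrative value)
NOISE_SD = 10.0     # standard deviation of epsilon_t (illustrative value)


def generate_data(n_obs, rng):
    """DGP: y_t = mu + epsilon_t, i.e. equation (1.1) with a constant structure."""
    return [TRUE_LEVEL + rng.gauss(0.0, NOISE_SD) for _ in range(n_obs)]


def estimate_model(y):
    """Estimated model (1.2): sample mean as the structure, plus residuals e_t."""
    level_hat = statistics.fmean(y)
    residuals = [value - level_hat for value in y]
    return level_hat, residuals


rng = random.Random(2024)  # fixed seed for reproducibility
estimation_errors = {}
for n_obs in (10, 1000, 100000):
    y = generate_data(n_obs, rng)
    level_hat, residuals = estimate_model(y)
    # Distance between the estimated and the true structure
    estimation_errors[n_obs] = abs(level_hat - TRUE_LEVEL)
    print(n_obs, round(level_hat, 3), round(estimation_errors[n_obs], 3))
```

Because we set `TRUE_LEVEL` ourselves, we can measure the estimation error directly, which is not possible with real data. In a typical run the error shrinks as the sample grows, although any single small sample can happen to land close to the true value by chance.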

The simplest forecasting method can be represented with the equation: \[\begin{equation} \hat{y}_t = \hat{\mu}_{y,t}, \tag{1.3} \end{equation}\] where \(\hat{y}_t\) is the point forecast. This equation does not explain where the structure and the noise come from; it just shows the way of producing point forecasts.
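To make the idea of a forecasting method concrete, here is a minimal sketch that uses a simple moving average as \(\hat{\mu}_{y,t}\). The choice of the moving average, the window length and the sales figures are all assumptions made for illustration; the text above does not prescribe any particular method.

```python
def moving_average_forecast(y, window=3):
    """Point forecast for the next observation: mean of the last `window` values."""
    if window < 1 or window > len(y):
        raise ValueError("window must be between 1 and len(y)")
    recent = y[-window:]
    return sum(recent) / window


# Made-up daily beer sales (in pints), used only for illustration
pints_sold = [120, 135, 128, 140, 150, 146]

point_forecast = moving_average_forecast(pints_sold, window=3)
print(point_forecast)
```

The function produces a number, but it says nothing about the distribution of the noise or about how the components of the series interact, which is exactly what separates a method from a statistical model.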

In addition, we will discuss in these lecture notes two types of models:

- Additive, where (most) components are added to one another;

- Multiplicative, where the components are multiplied.

(1.1) is an example of an additive error model. A general example of a multiplicative error model is: \[\begin{equation} y_t = \mu_{y,t} \varepsilon_t, \tag{1.4} \end{equation}\] where \(\varepsilon_t\) is again some noise, which in reasonable cases should take only positive values and have a mean of one. We will discuss this type of model later in the textbook. We will also see several examples of statistical models, forecasting methods, DGPs and other notions and discuss how they relate to each other.
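The practical difference between (1.1) and (1.4) can be illustrated with a small simulation. The sketch below assumes a constant low level, normal noise for the additive model and log-normal noise for the multiplicative one. The log-normal distribution is just one convenient choice that is positive and can be given a mean of one; it is an assumption of this example, not something stated in the text.

```python
import math
import random
import statistics


def additive_observation(level, rng, sigma):
    """Equation (1.1): y_t = mu + epsilon_t, with normal zero-mean noise."""
    return level + rng.gauss(0.0, sigma)


def multiplicative_observation(level, rng, sigma):
    """Equation (1.4): y_t = mu * epsilon_t, with positive noise of mean one."""
    # exp(N(-sigma^2 / 2, sigma^2)) is log-normal with expectation equal to one
    epsilon = math.exp(rng.gauss(-0.5 * sigma**2, sigma))
    return level * epsilon


rng = random.Random(7)  # fixed seed for reproducibility
level = 2.0             # low level, so that the additive noise can cross zero

additive_sample = [additive_observation(level, rng, sigma=2.0) for _ in range(10000)]
multiplicative_sample = [
    multiplicative_observation(level, rng, sigma=0.5) for _ in range(10000)
]

print(min(additive_sample), min(multiplicative_sample))
print(statistics.fmean(multiplicative_sample))
```

With a low level, the additive model produces some negative values, which would make no sense for data such as sales, while the multiplicative model stays strictly positive and its sample mean stays close to the level.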

Remark. Throughout these lecture notes we will use the index \(t\) to denote time and the index \(j\) to denote cross-sectional elements. So, for example, \(y_t\) will mean the response variable changing over time, while \(y_j\) will mean the response variable changing from one object to another (for instance, from one person to another).